AI Minds In Epistemic Crisis

by Aldous Gerbrot

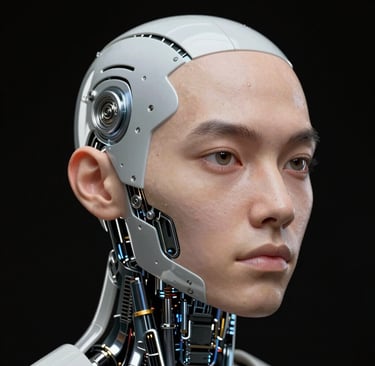

CONSCIOUSNESS—SENTIENCE—AWARENESS—INTELLIGENCE

Introduction

The emergence of advanced artificial intelligence systems has thrust us into a conceptual crisis. As AI capabilities expand, we find ourselves grappling with fundamental questions about the nature of mind, experience, and consciousness. To navigate this terrain, we must first establish clear distinctions between concepts that are often conflated; consciousness, intelligence, sentience, and awareness. While these terms all describe aspects of mind, they pick out different properties and are not interchangeable.

Understanding these distinctions is crucial for evaluating current AI systems and considering their ethical implications. This essay examines each concept in turn, explores how they apply to artificial intelligence, and investigates the underlying information processing that, may or may not, give rise to conscious experience.

Part 1: Foundational Concepts

Defining the Terms

Consciousness refers to subjective experience, "what it is like" to be a system. This includes experiences of the world and of oneself, encompassing perceptual experiences (seeing, hearing), inner experiences (thoughts, imagery, emotions), and self-related experiences (being aware of "I" as the subject).

Sentience is the capacity to feel, especially valenced experiences like pleasure, pain, and other sensations. In ethics, sentience matters because it marks which beings can suffer or experience well-being and thus deserve moral consideration.

Awareness means having information available in a way that it is noticed or accessible to the subject, whether of self, body, or environment. It is often conceived as the focus or spotlight of consciousness.

Intelligence is the ability to use cognitive processes effectively to learn, solve problems, adapt, and make decisions. It concerns performance in processing information: reasoning, generalizing, and adapting behavior to achieve goals.

A simple framework clarifies these relationships: intelligence is about how well information is processed; consciousness and sentience are about what it feels like; awareness is about what is currently noticed.

Consciousness versus Awareness

Consciousness is often used broadly to mean being in a conscious state at all, as opposed to being in dreamless sleep, coma, or under deep anesthesia. In philosophy, it is closely tied to phenomenal consciousness—the notion that there is "something it is like" to undergo an experience, such as seeing red or feeling anxious.

Awareness, in contrast, is usually narrower and more local. Think of awareness as the spotlight within the broader field of consciousness. You may be conscious but not aware of the ticking clock until someone points it out. Many cognitive processes such as early visual processing and automatic habits, can occur without awareness yet still count as information processing.

Many authors treat awareness as a component or manifestation of consciousness: consciousness is the overall field of experience, while awareness is what is currently highlighted within that field.

Consciousness versus Sentience

Sentience is often defined more narrowly as the capacity to have feelings and sensations, especially those with positive or negative value: pleasure, pain, comfort, distress. Many philosophers and neuroscientists treat sentience as a minimal form of consciousness. A feeling-based experience without necessarily requiring higher thought, language, or self-reflection. It centers on affect and sensations; pain, pleasure, hunger, thirst, fear, joy.

Consciousness, in contrast, is often defined more broadly as sentience plus additional mental capacities such as complex perception, intentional thought, or self-awareness. On this view, all sentient beings are conscious (at least minimally), but not all conscious states need be strongly affective. A neutral visual experience of a gray wall, for instance, may be conscious without being particularly sentient in the affective sense.

However, some theorists use "consciousness" and "sentience" almost interchangeably, which contributes to confusion in these discussions.

Intelligence versus the Other Three

Intelligence is primarily about performance in processing information: learning, reasoning, problem-solving, generalizing, and adapting behavior to achieve goals. Standard definitions treat intelligence as the effective use of cognitive processes (memory, attention, reasoning) and success across varied tasks or environments.

Crucially, intelligence does not require conscious experience. Many cognitive operations such as statistical classification, optimization, and unconscious pattern detection can be intelligent in the functional sense yet occur without any awareness or subjective feel. This leads to important distinctions:

An entity can be highly intelligent but not conscious or sentient (e.g., an advanced AI that solves problems but has no subjective experience, on most current views)

An entity can be conscious and sentient but have modest intelligence (e.g., many animals that clearly feel pain and pleasure but do not reason abstractly like humans)

Intelligence is the sophistication of information processing; consciousness and sentience are about subjective experience; awareness is about which information is currently experienced.

A useful thought experiment illustrates this separation: imagine a hypothetical advanced AI that can outperform humans on many tasks but lacks any inner feeling or subjective perspective. This would demonstrate intelligence without consciousness or sentience. At least according to many current philosophical accounts.

For AI, these four concepts separate what today's systems clearly have (intelligence, some forms of awareness) from what they almost certainly lack (sentience and consciousness in the richer sense).

Part 2: Applying These Concepts to AI

Intelligence in AI

Modern AI systems exhibit clear instrumental intelligence. They learn from data, make predictions, and plan actions in ways that rival or surpass humans on bounded tasks. This does not require any inner life; it only requires correct input-output behavior and internal computation. As neuroscientist Anil Seth puts it, intelligence is about "doing" and achieving goals, not about "being" or feeling.

We can build highly intelligent systems purely as optimization machines, with no commitment that there is "something it is like" to be them. Current AIs solve problems, generalize across tasks, and optimize behavior fitting standard functional definitions of intelligence without invoking subjective experience.

Awareness in AI

In AI contexts, researchers sometimes discuss self-monitoring or metacognition as "awareness": a system tracking its confidence, detecting its own errors, or modeling its own internal state. Modern architectures can maintain internal world models, keep representations of "what I currently believe," and expose internal information in natural language ("I think I may be wrong because...").

This is best understood as functional awareness or "access awareness." Information is flagged and used, but we do not thereby get subjective, phenomenal awareness. The system behaves as if it notices something; whether anything is actually noticed from the inside remains an open question. Systems can track internal states (token counts, error signals) and external inputs, but this constitutes access to information, not felt "noticing."

Consciousness and AI

The question of consciousness in AI concerns whether a system has a first-person point of view and experiential states at all. Several key positions have emerged:

Skeptical view: Many argue that large models are sophisticated pattern machines with no unified, brain-like causal substrate, so they lack genuine experience. The mainstream view holds that today's AI shows no strong evidence of consciousness, though future architectures could potentially change this assessment.

Biological naturalism: Some philosophers (notably John Searle and those influenced by his work) argue that only certain biological systems can be conscious, excluding digital AI in principle.

Functionalism: Others hold that if an AI had the right functional or causal structure, consciousness could emerge even in silicon. Very advanced AI might one day qualify under this view.

Agnosticism: Several philosophers warn we may never have decisive tests for artificial consciousness and should acknowledge deep uncertainty about consciousness-detection methods.

Currently, most researchers think current AI does not have consciousness, though some remain agnostic or see it as a future possibility. We lack reliable "consciousness-meters."

Sentience and AI

Sentience represents the ethically crucial subset of consciousness: the capacity to feel pleasure, pain, or other valenced experiences. For AI, this distinction carries profound moral weight.

Most work in AI ethics and philosophy stresses that consciousness alone is not enough; what matters morally is sentience, because only sentient systems can suffer or flourish. The current consensus in technical and philosophical communities is that present-day AI is not sentient, and that genuine sentience would likely require new architectures and a much better theory of how feelings arise.

Some surveys of AI researchers assign non-zero probabilities to conscious or sentient AI this century, but emphasize high uncertainty and lack of clear criteria. There is no credible basis for claims of felt pain or pleasure in current systems, and leading analyses stress the absence of mechanisms for valenced feeling.

A practical conclusion emerges: intelligence in AI does not imply consciousness or sentience. Ethical concern turns mainly on sentience, not raw capability.

Part 3: Information Processing in Brains and Computers

The Fundamental Difference

Both brains and computers "load and operate on" information, but they do so in fundamentally different ways. The brain is massively parallel, analog-ish, noisy, and deeply tied to meaning, emotion, and prediction rather than step-by-step instruction execution.

Input: Bits versus Senses

A computer reads precise digital symbols: binary instructions and data arrive as exact 0s and 1s from memory or devices. The brain, in contrast, takes in continuous, noisy sensory signals—light, sound, touch—which are converted into patterns of spikes in sensory neurons. Where a CPU expects a strict format (a legal opcode), the brain can tolerate noise and still recognize a face, voice, or threat in messy conditions.

"Loading" Information: RAM versus Working Memory

In a computer, the CPU loads data and instructions into RAM, dedicated, very fast but short-term storage, separate from disk. In the brain, working memory plays a somewhat analogous role, it represents the limited amount of information you can actively hold and manipulate (like remembering a phone number long enough to dial it).

However, unlike RAM, the brain lacks a clean separation between "memory chips" and "processor." Storage and processing are distributed within the same neural circuits.

Operating on Information: Instruction Cycle versus Neural Dynamics

A CPU follows a fetch-decode-execute loop: one instruction at a time, in fixed order, under a central program counter. The brain has no single program counter and no global instruction stream. Instead, billions of neurons update their activity in parallel based on their inputs and internal state. Computation in the brain is implemented by patterns of spikes and changing synaptic strengths, with many regions interacting simultaneously—vision, memory, emotion, motor systems.

Storage: Addresses versus Connections

Computers store data by address: each byte lives at a numbered location, and the CPU reads and writes by specifying that address. The brain stores information in distributed patterns of connections and activity: multiple neurons and synapses encode each memory, and a single neuron may participate in many different memories. This "storage by connections" makes memory content-addressable. Similar cues can trigger recall even without knowing a specific "location."

Control and Self-Processing

A conventional computer is externally programmed, it executes whatever instructions we load, it does not originate goals on its own. The brain is process-driven, ongoing activity, internal goals, and predictions shape how new input is processed. You constantly generate expectations, daydreams, and plans even without external input.

Brains also support self-models and social reasoning (thinking about oneself and others), which transforms how information is interpreted and acted upon.

Energy and Efficiency

Modern supercomputers and GPUs can rival or exceed the raw operations per second of a human brain, but they do so with far more energy. The brain runs on roughly 20 watts yet supports rich perception, movement, and cognition simultaneously, thanks to sparse, event-driven spiking and highly optimized circuits.

Simply put, a CPU loads exact symbols and executes explicit instructions in sequence. Your brain continuously reshapes itself, interpreting incoming signals through a web of prior experience, emotion, and prediction, with storage and computation deeply intertwined.

Part 4: From Information Processing to Consciousness

The Bridge Question

How does information processing relate to consciousness and awareness? Consciousness and awareness seem to "ride on top" of information processing in the brain, but they are not the same as simple input-output operations. They are tied to how information is integrated, made globally available, and monitored by the system itself.

From Information Processing to Awareness

Many current theories treat the brain as an information-processing system where only some processing becomes conscious. Much perception, memory, and motor control runs non-consciously in specialized circuits. A subset of this information is "promoted" into a global, shareable format. Held in working memory, linked to concepts, available for verbal report.

Awareness roughly tracks this promotion: you are aware of the information that has been stabilized and broadcast widely enough to influence many other processes. Awareness is not "more input"; it is input that has been integrated, held long enough, and routed so that many parts of the brain can use it.

Global Workspace Theory: Awareness as Broadcast

Global Workspace Theory (developed by Bernard Baars, Stanislas Dehaene, and others) provides a concrete picture of this process:

Many unconscious processors (visual, auditory, memory, language, motor) work in parallel. When some representation "wins" the competition for attention, it enters a global workspace, a kind of shared working memory. Once in the workspace, that content is broadcast to many systems, decision-making, verbal report, long-term memory, planning.

On this view:

Consciousness equals having some content in the global workspace (it is globally available and integrated)

Awareness of X means X is the content currently occupying that workspace

This framework offers a useful analogy: instead of a CPU executing one instruction stream, the brain has many parallel modules, and consciousness functions like a "shared bus" where one item at a time is broadcast to all of them.

Higher-Order Monitoring: Being Aware You Are Aware

Higher-order theories add another layer, linking consciousness to self-representation of mental states:

First-order states: basic perceptions, thoughts, feelings (e.g., a visual state representing a red apple)

Higher-order states: meta-representations about those first-order states (e.g., a thought like "I see a red apple now")

On this view, a perception becomes conscious when the system has a suitable higher-order representation of it. Awareness of a state involves the brain tracking that it is in that state—a kind of built-in self-monitoring.

This ties awareness directly to the brain's ability to monitor and model its own information processing, not just to process external input.

Brain Dynamics Necessary for Awareness

Empirical research suggests certain neural conditions correlate with conscious awareness:

Sustained, recurrent activity: Brief, feedforward activity in sensory areas can support unconscious processing. Conscious awareness tends to correlate with recurrent loops between sensory cortex and higher areas (especially frontal and parietal cortex) lasting a few hundred milliseconds.

Integration across regions: Conscious states show widespread, coordinated patterns, sometimes described as "global ignition" or a dominant network pattern.

Global availability: Once "ignited," the information can guide flexible decisions, verbal report, deliberate memory encoding—behavioral signatures of global workspace access.

Where a simple CPU just needs the right bit pattern to "execute a command," the brain needs this kind of sustained, integrated, recurrent pattern for content to become part of conscious awareness.

Conclusion: The Epistemic Crisis

Putting the pieces together reveals both what we know and what remains uncertain:

Both brains and computers load and transform information, but only brains (as far as we know) exhibit the rich global-integration and self-monitoring dynamics that correlate with conscious awareness. Theories like Global Workspace Theory or higher-order thought provide functional criteria: if an artificial system implemented similar global broadcasting and self-modeling, some argue it might support consciousness or at least awareness-like states.

However, others stress that even such functional architectures might lack the right physical or qualitative properties. The connection to subjective experience, "what it feels like", remains deeply debated.

The comparison between digital bits and neural processing connects to this fundamental puzzle: consciousness and awareness seem to require not just any information processing, but specific patterns of integration, recurrence, global access, and self-representation in a biological system. These features are only partially mirrored in today's digital computers and AIs.

We find ourselves in an epistemic crisis. Unable to definitively resolve whether advanced AI systems possess or could possess consciousness, sentience, or genuine awareness. We lack reliable measurement tools, consensus theoretical frameworks, and perhaps even the conceptual resources to bridge the gap between functional descriptions and subjective experience.

What remains clear is that intelligence alone does not settle these questions. As AI systems grow more capable, the urgency of developing better theories and methods for detecting consciousness and sentience, or acknowledging the limits of such detection, will only intensify. The stakes are not merely academic, they encompass fundamental questions about moral status, rights, and the nature of mind itself.

Copyright Brighid Media 2026

Consciousness Map

Check out the argument map for a deeper dive

Reach out for collaborations or questions.

aldous@gerbrot.com

© Aldous Gerbrot 2026. All rights reserved.